News

Unlearned but Not Forgotten: Data Extraction after Exact Unlearning in LLM

arXiv:2505.24379v3 Announce Type: replace-cross Abstract: Large Language Models are typically trained on datasets collected from...

Universal Adversarial Suffixes Using Calibrated Gumbel-Softmax Relaxation

arXiv:2512.08123v1 Announce Type: new Abstract: Language models (LMs) are often used as zero-shot or few-shot...

Unique Hard Attention: A Tale of Two Sides

arXiv:2503.14615v2 Announce Type: replace-cross Abstract: Understanding the expressive power of transformers has recently attracted attention...

Unifying Symbolic Music Arrangement: Track-Aware Reconstruction and Structured Tokenization

arXiv:2408.15176v4 Announce Type: replace-cross Abstract: We present a unified framework for automatic multitrack music arrangement...

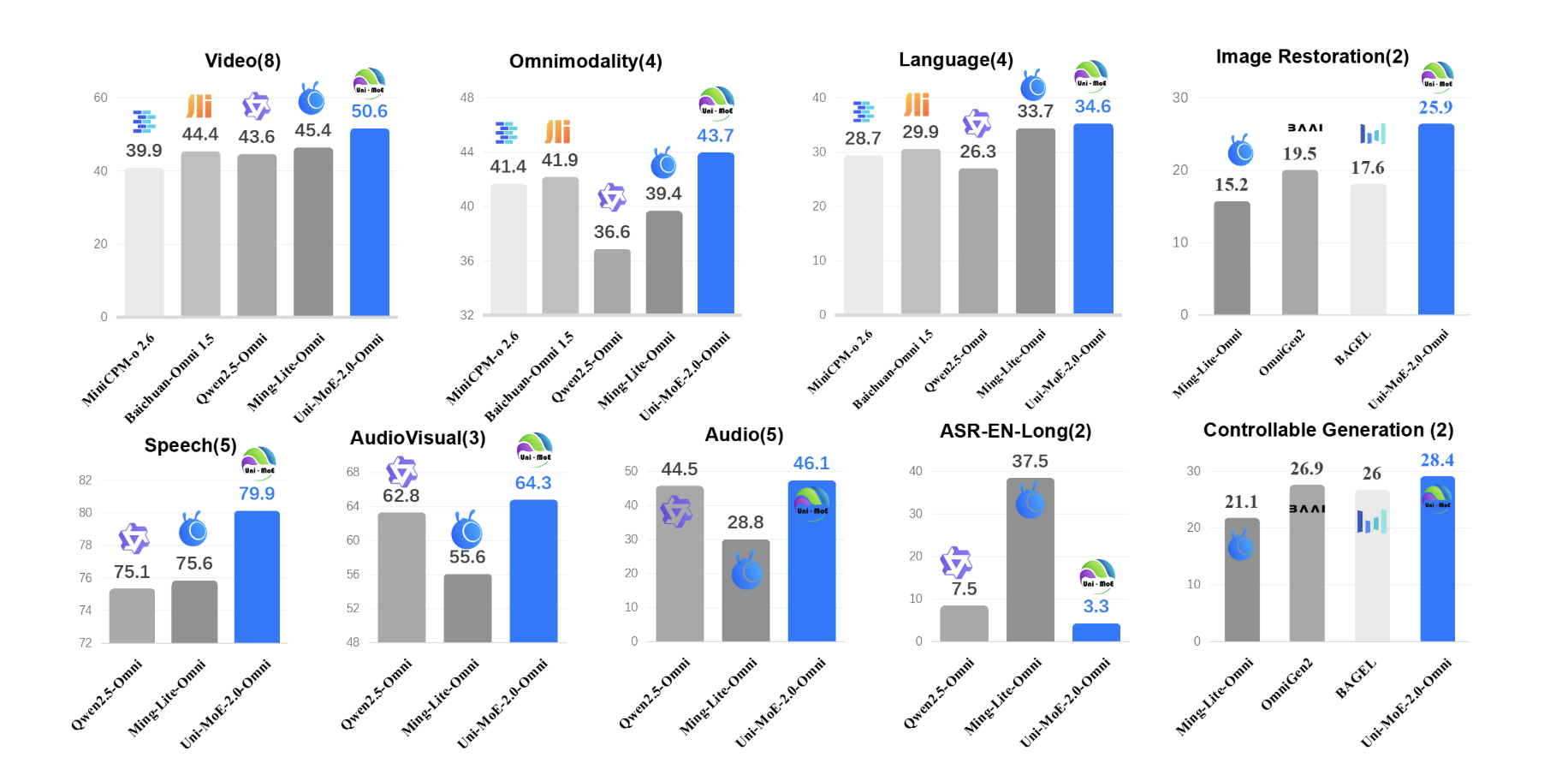

Uni-MoE-2.0-Omni: An Open Qwen2.5-7B Based Omnimodal MoE for Text, Image, Audio and Video Understanding

How do you build one open model that can reliably understand text, images, audio and...

Understanding the modern cybercrime landscape

Throughout 2025, HPE observed significant changes in how cybercriminals operate. Analyzing real-world threats, our HPE...

Understanding QA generation: Extracting Parametric and Contextual Knowledge with CQA for Low Resource Bangla Language

arXiv:2602.01451v1 Announce Type: new Abstract: Question-Answering (QA) models for low-resource languages like Bangla face challenges...

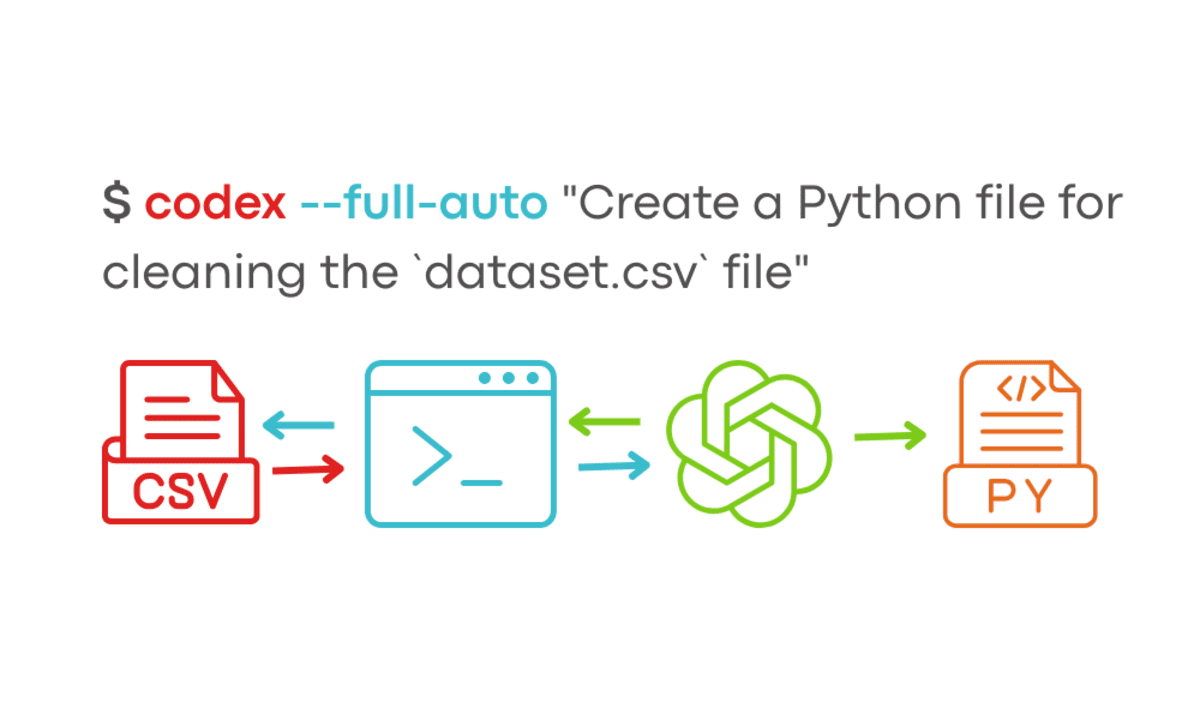

Understanding OpenAI Codex CLI Commands

We have seen a new era of agentic IDEs like Windsurf and Cursor AI...

Understanding In-context Learning of Addition via Activation Subspaces

arXiv:2505.05145v2 Announce Type: replace-cross Abstract: To perform in-context learning, language models must extract signals from...

Under the Influence: Quantifying Persuasion and Vigilance in Large Language Models

arXiv:2602.21262v2 Announce Type: replace Abstract: With increasing integration of Large Language Models (LLMs) into areas...

Uncertainty-Aware Budget Allocation for Adaptive Test-Time Reasoning

arXiv:2605.26849v1 Announce Type: new Abstract: Sampling multiple responses improves language model reasoning, but uniform compute...

Uncertainty Quantification for Language Models: A Suite of Black-Box, White-Box, LLM Judge, and Ensemble Scorers

arXiv:2504.19254v4 Announce Type: replace Abstract: Hallucinations are a persistent problem with Large Language Models (LLMs)...