Table of contents

- The Growing Role of AI in Biomedical Research

- The Core Challenge: Matching Expert-Level Reasoning

- Why Traditional Approaches Fall Short

- Biomni-R0: A New Paradigm Using Reinforcement Learning

- Training Strategy and System Design

- Results That Outperform Frontier Models

- Designing for Scalability and Precision

- Key Takeaways from the research include:

The Growing Role of AI in Biomedical Research

The field of biomedical artificial intelligence is evolving rapidly, with increasing demand for agents capable of performing tasks that span genomics, clinical diagnostics, and molecular biology. These agents aren’t merely designed to retrieve facts; they are expected to reason through complex biological problems, interpret patient data, and extract meaningful insights from vast biomedical databases. Unlike general-purpose AI models, biomedical agents must interface with domain-specific tools, comprehend biological hierarchies, and simulate workflows similar to those of researchers to effectively support modern biomedical research.

The Core Challenge: Matching Expert-Level Reasoning

However, achieving expert-level performance in these tasks is far from trivial. Most large language models fall short when dealing with the nuance and depth of biomedical reasoning. They may succeed on surface-level retrieval or pattern recognition tasks, but often fail when challenged with multi-step reasoning, rare disease diagnosis, or gene prioritization, areas that require not just data access, but contextual understanding and domain-specific judgment. This limitation has created a clear gap: how to train biomedical AI agents that can think and act like domain experts.

Why Traditional Approaches Fall Short

While some solutions leverage supervised learning on curated biomedical datasets or retrieval-augmented generation to ground responses in literature or databases, these approaches have drawbacks. They often rely on static prompts and pre-defined behaviors that lack adaptability. Furthermore, many of these agents struggle to effectively execute external tools, and their reasoning chains collapse when faced with unfamiliar biomedical structures. This fragility makes them ill-suited for dynamic or high-stakes environments, where interpretability and accuracy are non-negotiable.

Biomni-R0: A New Paradigm Using Reinforcement Learning

Researchers from Stanford University and UC Berkeley introduced a new family of models called Biomni-R0, built by applying reinforcement learning (RL) to a biomedical agent foundation. These models, Biomni-R0-8B and Biomni-R0-32B, were trained in an RL environment specifically tailored for biomedical reasoning, using both expert-annotated tasks and a novel reward structure. The collaboration combines Stanford’s Biomni agent and environment platform with UC Berkeley’s SkyRL reinforcement learning infrastructure, aiming to push biomedical agents past human-level capabilities.

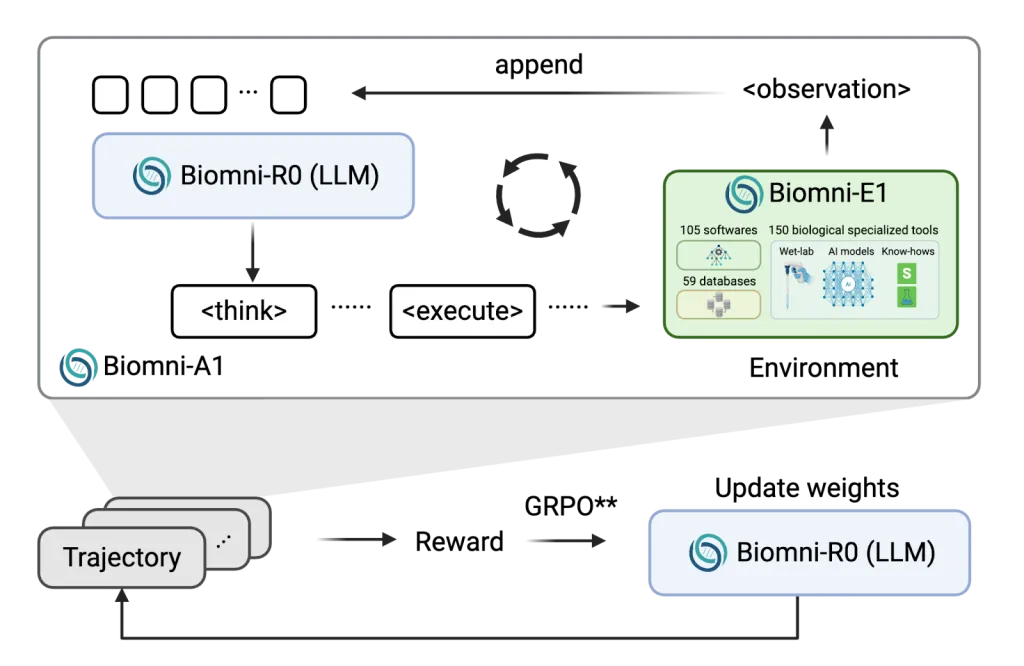

Training Strategy and System Design

The researchers used a two-phase training process. First, they applied supervised fine-tuning (SFT) to high-quality trajectories sampled from Claude 4 Sonnet and filtered by rejection sampling, bootstrapping the agent’s ability to follow structured reasoning formats. Next, they fine-tuned the models with reinforcement learning, optimizing for two kinds of rewards: one for correctness (e.g., selecting the right gene or diagnosis), and another for response formatting (e.g., using structured <think> and <answer> tags correctly).
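A minimal sketch of how a two-part reward of this kind could be computed, and how the same checks could act as a rejection-sampling filter for SFT trajectories. The weights, function names, exact-match scoring, and the precise tag grammar are illustrative assumptions, not the authors' implementation.

```python
import re

# Hypothetical weights: the source does not say how the two rewards are combined.
CORRECTNESS_WEIGHT = 1.0
FORMAT_WEIGHT = 0.2

# Assumed grammar: one <think> block followed by one <answer> block, nothing else.
_FORMAT_RE = re.compile(
    r"\s*<think>(.+?)</think>\s*<answer>(.+?)</answer>\s*", re.DOTALL
)
_TAGS = ("<think>", "</think>", "<answer>", "</answer>")


def format_reward(response: str) -> float:
    """Return 1.0 if the response follows the assumed tag grammar, else 0.0."""
    if any(response.count(tag) != 1 for tag in _TAGS):
        return 0.0
    return 1.0 if _FORMAT_RE.fullmatch(response) else 0.0


def correctness_reward(response: str, gold: str) -> float:
    """Return 1.0 if the <answer> text matches the gold label (case-insensitive)."""
    match = re.search(r"<answer>(.*?)</answer>", response, re.DOTALL)
    if match is None:
        return 0.0
    predicted = match.group(1).strip().lower()
    return 1.0 if predicted == gold.strip().lower() else 0.0


def total_reward(response: str, gold: str) -> float:
    """Weighted sum of the correctness and formatting rewards."""
    return (
        CORRECTNESS_WEIGHT * correctness_reward(response, gold)
        + FORMAT_WEIGHT * format_reward(response)
    )


def rejection_sample(candidates: list[str], gold: str) -> list[str]:
    """Keep only sampled trajectories that are both correct and well formatted."""
    return [
        c for c in candidates
        if correctness_reward(c, gold) == 1.0 and format_reward(c) == 1.0
    ]
```

Keeping the two terms separate lets a response earn partial credit for a correct answer with broken formatting (or the reverse), which is one plausible reason to score them independently. Real biomedical tasks would likely need task-specific answer matching rather than the exact string comparison used here.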

To ensure computational efficiency, the team developed asynchronous rollout scheduling that minimized bottlenecks caused by external tool delays. They also expanded the context length to 64k tokens, allowing the agent to manage long multi-step reasoning conversations effectively.
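The idea behind asynchronous rollout scheduling can be sketched with Python's `asyncio`: while one episode waits on a slow tool, others keep making progress. The `call_tool`, `rollout`, and `run_rollouts` names, the simulated latencies, and the concurrency cap are all hypothetical; this is not the SkyRL scheduler.

```python
import asyncio


async def call_tool(name: str, latency: float) -> str:
    """Stand-in for an external tool call (database query, code execution)."""
    await asyncio.sleep(latency)  # simulated tool delay
    return f"{name}:done"


async def rollout(task_id: int, tool_latencies: list[float]) -> list[str]:
    """One multi-turn episode: each turn waits on its tool before continuing."""
    observations = []
    for turn, latency in enumerate(tool_latencies):
        result = await call_tool(f"task{task_id}-turn{turn}", latency)
        observations.append(result)
    return observations


async def run_rollouts(
    latencies_per_task: list[list[float]], max_concurrent: int = 8
) -> list[list[str]]:
    """Run many episodes concurrently, capped at max_concurrent in flight."""
    semaphore = asyncio.Semaphore(max_concurrent)

    async def bounded(task_id: int, latencies: list[float]) -> list[str]:
        async with semaphore:
            return await rollout(task_id, latencies)

    jobs = (bounded(i, lat) for i, lat in enumerate(latencies_per_task))
    # gather returns results in submission order, not completion order
    return await asyncio.gather(*jobs)


if __name__ == "__main__":
    results = asyncio.run(run_rollouts([[0.05, 0.10], [0.30], [0.01, 0.01, 0.01]]))
    for task_id, observations in enumerate(results):
        print(task_id, observations)
```

Because the episodes overlap, total wall-clock time in this toy setup is governed by the slowest episode rather than by the sum of all tool delays.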

Results That Outperform Frontier Models

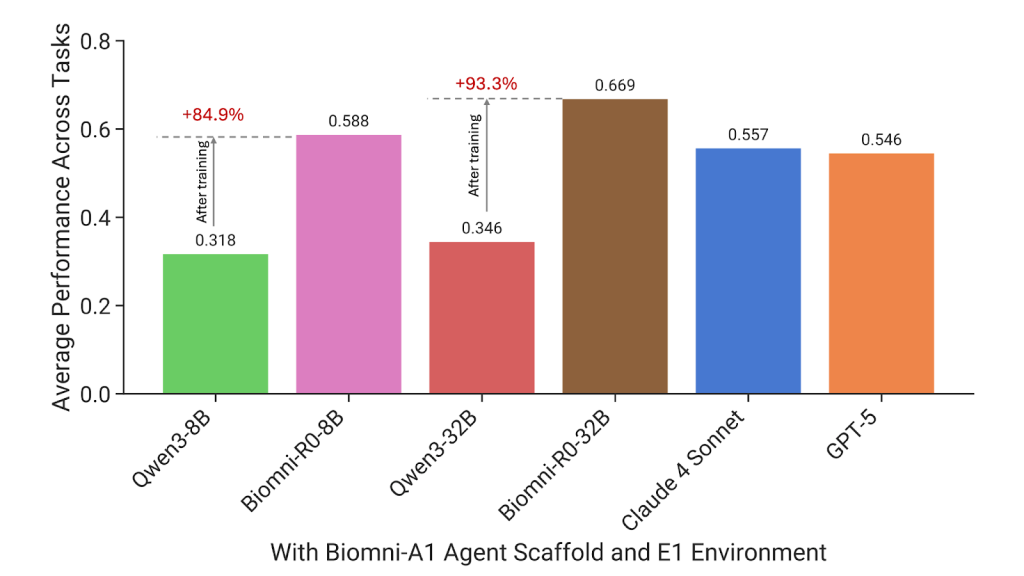

The performance gains were significant. Biomni-R0-32B achieved a score of 0.669, up from the base model’s 0.346. Even Biomni-R0-8B, the smaller version, scored 0.588, outperforming general-purpose models like Claude 4 Sonnet and GPT-5, which are both much larger. On a task-by-task basis, Biomni-R0-32B scored highest on 7 out of 10 tasks, while GPT-5 led in 2 and Claude 4 Sonnet in just 1. One of the most striking results was in rare disease diagnosis, where Biomni-R0-32B reached 0.67, compared to Qwen-32B’s 0.03, a more than 20× improvement. Similarly, in GWAS variant prioritization, the model’s score increased from 0.16 to 0.74, demonstrating the value of domain-specific reasoning.
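For readers who want to check the arithmetic behind these comparisons, the short script below computes absolute and relative gains from the scores quoted above. Only the numbers come from the article; the dictionary labels and the `improvement` helper are illustrative.

```python
# (before, after) score pairs as quoted in the article
scores = {
    "overall (base model -> Biomni-R0-32B)": (0.346, 0.669),
    "rare disease diagnosis (Qwen-32B -> Biomni-R0-32B)": (0.03, 0.67),
    "GWAS variant prioritization": (0.16, 0.74),
}


def improvement(before: float, after: float) -> tuple[float, float]:
    """Return (absolute gain, relative ratio) between two scores."""
    return after - before, after / before


for name, (before, after) in scores.items():
    delta, ratio = improvement(before, after)
    print(f"{name}: +{delta:.3f} absolute, {ratio:.1f}x relative")
```

The ratios work out to roughly 1.9× overall, 22× for rare disease diagnosis (consistent with the "more than 20×" claim), and 4.6× for GWAS variant prioritization.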

Designing for Scalability and Precision

Training large biomedical agents requires dealing with resource-heavy rollouts involving external tool execution, database queries, and code evaluation. To manage this, the system decoupled environment execution from model inference, allowing more flexible scaling and reducing idle GPU time. This design kept resource use efficient even when tools had widely varying execution latencies. Longer reasoning sequences also proved beneficial. The RL-trained models consistently produced lengthier, structured responses, and response length correlated strongly with better performance, suggesting that depth and structure in reasoning are key indicators of expert-level understanding in biomedicine.
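One way to picture the decoupling of environment execution from model inference is a queue between the two: environment workers push observations as soon as their tools finish, and the inference side batches whatever is ready instead of waiting for the slowest tool. The sketch below is a toy illustration under that assumption; the function names, the queue-based design, and the batch size are hypothetical and not taken from the Biomni-R0 system.

```python
import asyncio


async def environment_worker(
    episode_id: int, latency: float, ready_queue: asyncio.Queue
) -> None:
    """Simulate a tool call, then hand the observation to the inference side."""
    await asyncio.sleep(latency)  # simulated tool or database delay
    await ready_queue.put((episode_id, f"observation-{episode_id}"))


async def inference_loop(
    ready_queue: asyncio.Queue, total: int, max_batch: int = 4
) -> list[list[int]]:
    """Batch whichever observations are ready; never block on one slow episode."""
    batches = []
    served = 0
    while served < total:
        batch = [await ready_queue.get()]  # wait for at least one item
        while len(batch) < max_batch and not ready_queue.empty():
            batch.append(ready_queue.get_nowait())
        # A real system would run a batched model forward pass here.
        batches.append([episode_id for episode_id, _ in batch])
        served += len(batch)
    return batches


async def main(latencies: list[float]) -> list[list[int]]:
    ready_queue: asyncio.Queue = asyncio.Queue()
    workers = [
        asyncio.create_task(environment_worker(i, lat, ready_queue))
        for i, lat in enumerate(latencies)
    ]
    batches = await inference_loop(ready_queue, total=len(latencies))
    await asyncio.gather(*workers)
    return batches


if __name__ == "__main__":
    print(asyncio.run(main([0.01, 0.01, 0.20, 0.02])))
```

In this arrangement the fast episodes are served in early batches while the slow one arrives later on its own, which is the behavior that keeps the (simulated) model busy despite uneven tool latencies.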

Key Takeaways from the research include:

- Biomedical agents must perform deep reasoning, not just retrieval, across genomics, diagnostics, and molecular biology.

- The central problem is achieving expert-level task performance, particularly in complex areas such as rare disease diagnosis and gene prioritization.

- Traditional methods, including supervised fine-tuning and retrieval-based models, often fall short in terms of robustness and adaptability.

- Biomni-R0, developed by Stanford and UC Berkeley, uses reinforcement learning with expert-based rewards and structured output formatting.

- The two-phase training pipeline, SFT followed by RL, proved highly effective in optimizing performance and reasoning quality.

- Biomni-R0-8B delivers strong results with a smaller architecture, while Biomni-R0-32B scores highest on 7 of 10 tasks, ahead of Claude 4 Sonnet and GPT-5.

- Reinforcement learning enabled the agent to generate longer, more coherent reasoning traces, a key trait of expert behavior.

- This work lays the foundation for super-expert biomedical agents, capable of automating complex research workflows with precision.

The post Biomni-R0: New Agentic LLMs Trained End-to-End with Multi-Turn Reinforcement Learning for Expert-Level Intelligence in Biomedical Research appeared first on MarkTechPost.